DETACT

A speculative tactile navigation concept for people with visual impairment

Field

Invention Design

Team

Jannes Daur, Leon Burg, me

Contribution

Research, Ideation, Concept, Product Design, User-Testing

Context

study project, third semester

Duration

four weeks

Year

2023

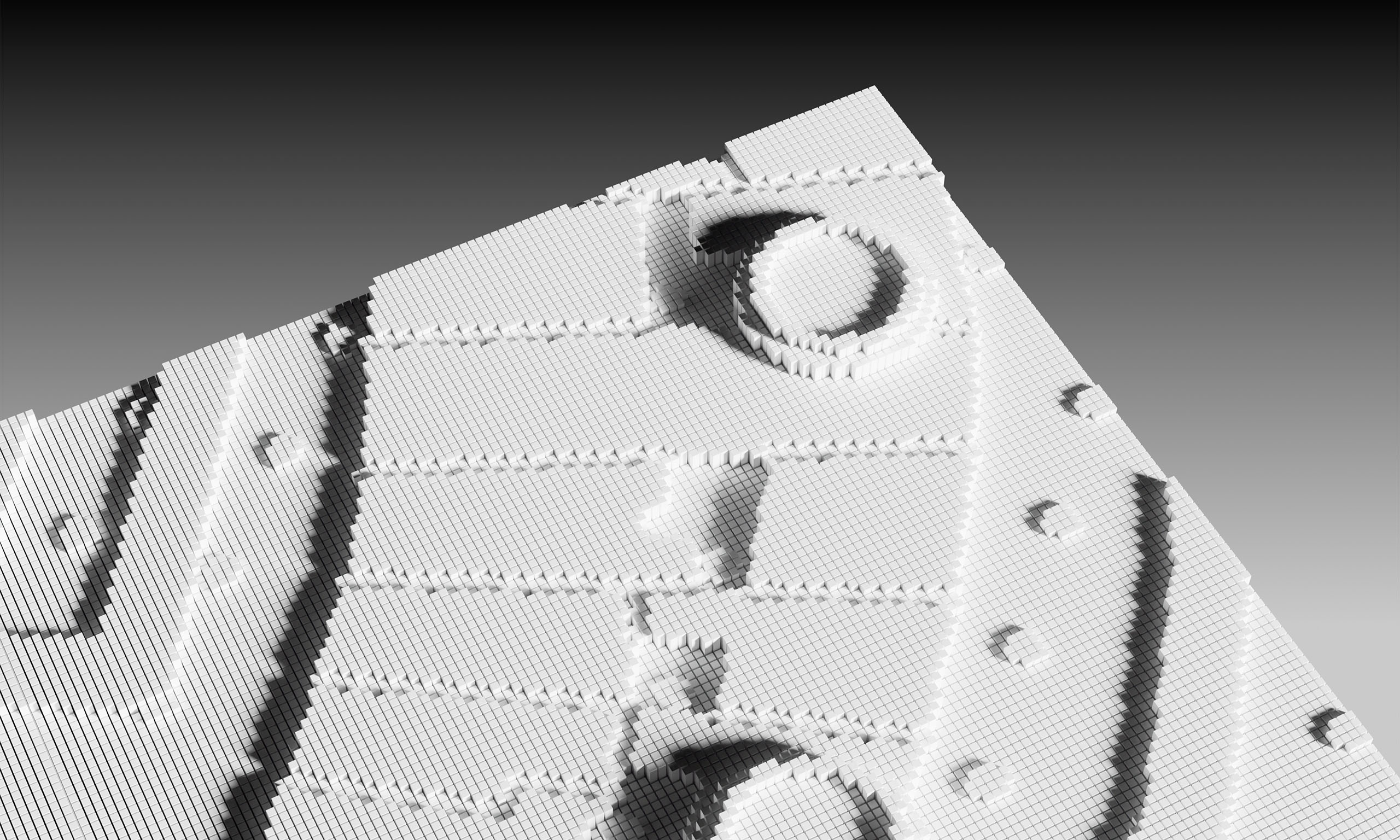

Detact is a speculative concept for a tactile map system designed to help blind people explore and understand places. It is based on an innovative shape-changing display that makes information tangible.

Problem

Navigating an unfamiliar city is challenging. Modern map applications have reduced this struggle. For the visually impaired, routing functions and voice output make simple navigation from point A to B possible. This, however, does not convey the vast amount of information that sighted people gain while using digital maps.

Idea

The concept is based on inFORM, a research project at the MIT Media Lab. The team developed a dynamic shape display composed of individual pins adjustable in height, which can render 3D content physically. Detact speculatively projects this tactile display technology into the future and places it in a context of use. We hypothesise that the resolution of such pin displays will increase tremendously in the future and that the technology could be built into a portable device.

All the map information that is not conveyed via voice output in navigation apps can be provided with the shape-changing display. Any type of information, such as the user’s position, streets and points of interest, would become tangible. Unlike analog tactile maps, Detact could be used flexibly in any location, with different scales and levels of information. This could make it easier for people with visual impairment to actively explore and comprehend their surroundings.

Process

Research

After analysing various maps for sighted and visually impaired people, we agreed on a minimum viable product for Detact. It should include different areas (downtown, industrial, etc.), lines (streets, rivers, etc.), buildings, points of interest and a position marker. Reducing the map to these elements makes it easier to distinguish the remaining tactile information. For the next steps, we gathered insights on how to design for visually impaired people from existing research papers and standards.

Exploration

We worked iteratively on the different forms. We tested different approaches to conveying information for the points of interest, including braille marks and symbolic icons. In the end, we decided on an abstract series of shapes that was created in the context of a research project in the 1970s. Here, an abstract shape such as a circle represents a restaurant. The shape has nothing to do with its meaning, but because of their simple design the tactile markers can be clearly identified and distinguished from each other and from the other elements of the map.

Result

After further test series for areas and routes, we developed a modular system for constructing the 3D models of our tactile maps.

As an application example, four maps of the same location were created with three different scales and with different information layers.

User Testing

We tested our project with a small group of visually impaired individuals and received a lot of positive feedback. All participants confirmed that Detact would be helpful in their daily lives and expressed a desire to see the project realised in the future. Almost all information was clearly distinguishable, and experienced testers who had been blind for a long time learned it surprisingly quickly.

Conclusion

Designing without conventional visual elements such as typography and colours proved to be a significant challenge. However, the final testing confirmed that our approach works. Overall, the concept has the potential to create value for society.


AWARE UI

An interface for natural interactions

Field

Interface Design

Team

Malte Fial & me

Contribution

Research, Ideation, Concept, UI-Design, Sound-Design

Context

study project, third semester

Duration

three weeks

Year

2023

Aware UI is an innovative interface concept based on machine learning that enables natural interaction. The system combines touchscreen and voice control to create a form of interaction that takes human gestures, speech and non-verbal communication into account to provide a familiar and user-friendly experience.

Objective

The goal of the project was to create a new type of communication with an interface, one that comes closer to natural communication between people and is directly derived from it. Natural actions and reactions such as focusing on the other person, making eye contact and listening should carry over to the interface.